The Milgram Experiment: How ordinary people become capable of cruelty

With the end of the Middle Ages, many believed that humanity had entered a period of diminished violence and greater empathy in human relations.

However, the Modern Era saw the expansion of slavery and culminated in events such as the French Revolution, one of the most violent episodes in history.

By the Contemporary Era, the First and Second World Wars ushered in a landscape of barbarism comparable to Aztec sacrificial rituals or the spectacles of the Roman Colosseum.

After World War II, agreements and treaties were signed between nations, carrying the promise that atrocities of such magnitude would never occur again without those responsible being severely punished.

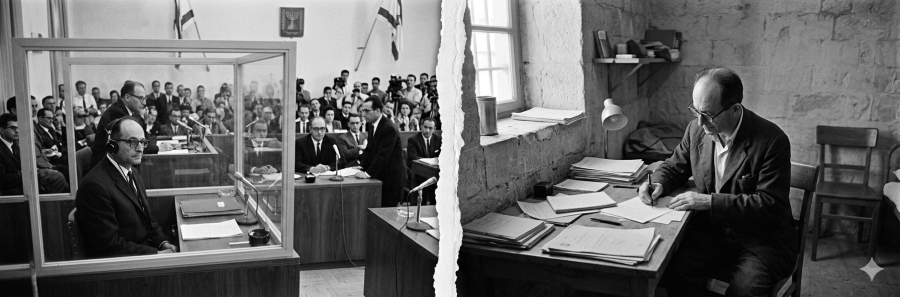

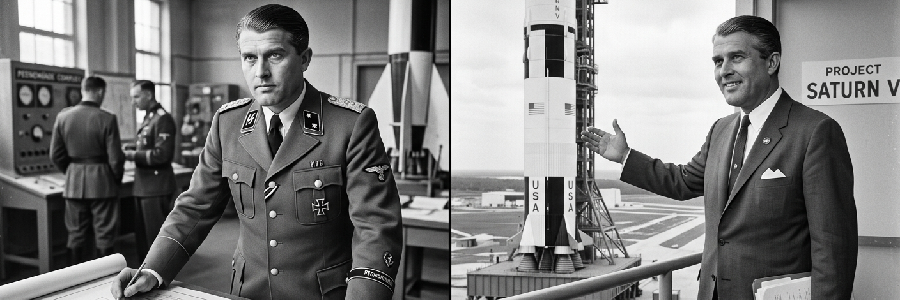

Yet, for a civilization that had begun to see itself as a peacemaker, one question remained: how was it possible that, in the middle of the 20th century, so many ordinary people (soldiers, doctors, politicians, lawyers, scientists, teachers) became cogs in systems capable of barbarism such as the concentration camps, the Holodomor in Ukraine, and the atomic bombings?

Governments, in times of war, played their part through massive propaganda, often dehumanizing enemy peoples or minority groups. However, to the keen observer, propaganda did not hide an essential fact: although nations were at war, their populations consisted of ordinary people, workers concerned with caring for their families and earning a living, who had little say in the actions of the State. Still, the execution of immoral projects depended on the adherence and participation of a significant portion of this mass.

The central question, therefore, was clear: Why do ordinary individuals collaborate in actions they would consider immoral under normal circumstances?

Auschwitz, Poland

The Milgram Experiment

It was this very question that led Stanley Milgram, then a psychologist at Yale University, to design an experiment in 1961 to test the hypothesis that atrocities do not necessarily stem from the specific characteristics of a people, but from a pattern of obedience to authority.

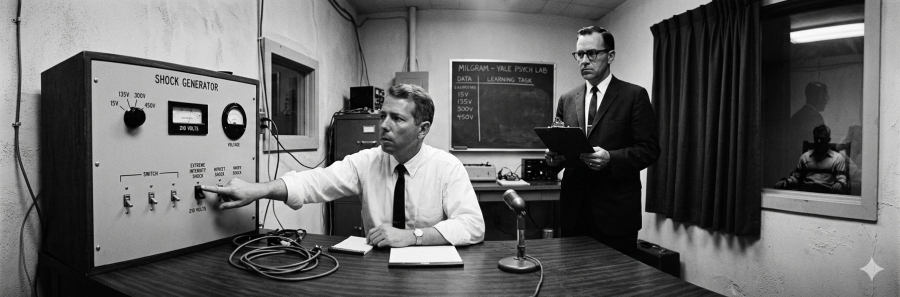

The experiment was simple:

- A volunteer was invited to participate in a study on memory and learning.

- In a rigged drawing, the volunteer assumed the role of the “teacher,” while another participant (an actor) was the “learner.”

- Both were placed in separate rooms, but the teacher could hear the learner.

- For every mistake the learner made, the teacher was instructed to administer an electric shock.

- The intensity of the shocks started at 15 volts and increased progressively, in 15-volt increments, until reaching 450 volts.

- The shocks were fake, but the participant did not know this.

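- A researcher in a lab coat, present in the room, urged the volunteer to continue whenever they hesitated, using a fixed sequence of prompts such as “Please continue” and “The experiment requires that you continue.”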

The results were unsettling:

- Approximately 65% of participants (26 of 40 in the original study) administered the maximum level of 450 volts.

- Despite the learner’s pleas to stop and demonstrations of suffering, participants continued to follow instructions.

- In the original study, every participant reached at least 300 volts, the point at which the “learner” stopped responding altogether.

- Many exhibited strong emotional discomfort (anxiety, fear, nervous laughter), yet they continued regardless.

- A few even showed signs of satisfaction in administering punishment.

Even when reluctant, the majority proceeded under the pressure of authority.

Variations of the experiment showed that obedience decreased when the orders came from an apparently ordinary person rather than the researcher, or when the study was moved from Yale to a modest office building with no visible university affiliation. This suggests that the perception of authority, more than its actual legitimacy, is decisive: the more the person giving orders appeared to be an ordinary individual, the lower the compliance.

What the Results Reveal

From the experiments, several key conclusions stand out:

- Obedience proved more decisive than the participants’ personal principles and values: even when they considered the action wrong and immoral, most chose to obey a perceived authority and do what they would never have done on their own initiative.

- The appearance of authority proved more influential than any real authority: a university setting, a formal posture, scientific attire, and a rigorous procedure were enough to create a framework of authority strong enough to keep participants from acting on their principles.

- The transfer of responsibility to authority: participants attributed the blame for any harm caused by their actions to those running the experiment. This is the logic behind the phrase “I’m just doing my job”: even if the result is immoral, the task was assigned by someone the person regards as truly responsible for the consequences.

- The gradual escalation of the shocks reduces moral resistance: in a variation in which the shocks began at 150 volts, participant obedience dropped significantly.

- Discomfort does not prevent obedience: even in the face of intense internal conflict, most participants continued the experiment to high shock levels.

- The environment has a significant influence: the more elements signaled authority, the higher the compliance; conversely, when one participant began to disobey in front of others, disobedience also increased.

Implications

In light of these observations, it becomes clearer how susceptible individuals are, not only to established authority, but also to being drawn into actions that violate their own principles.

The experiment also shows that the environment exerts more influence than is generally assumed and, more worryingly, that an artificial environment, such as a staged laboratory, is often enough to produce that influence.

The fact that personal values had so little influence on these results serves as an important warning: for many participants, those values may have been merely a convenient veneer that could not withstand the first confrontation with a concrete situation under pressure.

The transfer of responsibility may be one of the most serious issues. Rulers not infrequently create unjust laws against their own populations for their own benefit; however, these rules would be nothing more than words on paper without an entire structure of individuals who firmly believe they must apply them. They do so even when the rules contradict their principles and, at times, other laws, simply because they feel they must follow orders and are “just doing their job.” The possibility of disobeying in clearly immoral cases often goes unconsidered, and direct, personal responsibility for one’s own acts is handed up to higher authorities.

The justification that someone is “only following orders” is frequently used for morally questionable actions. However, this logic is difficult to sustain: if taken to its extreme, it completely dilutes individual responsibility within any chain of command, regardless of the severity of the acts.

Milgram’s experiment (illustration)

Final Considerations

Where principles hold strength only in the abstract, and where individual responsibility is transferred to third parties, authority finds fertile ground to advance, even in clearly immoral projects.

The average individual has never been as exposed to this risk as they are now, in a context where power structures, whether governmental or otherwise, are increasingly positioned above the population, equipped with technical and financial resources capable of exerting unprecedented control over society. The very nature of this power, often invisible and diffuse, makes it difficult to identify who, in fact, holds control and who is truly responsible for actions that result in harm to the majority of the population.

When this power is exercised by a government, the consequences can be catastrophic, resulting in wars, political persecution, and widespread human rights violations. In these circumstances, blind obedience to authority can lead ordinary individuals to participate in atrocities they would never commit on their own initiative.

However, even outside the governmental sphere, the phenomenon of obedience to authority represents a significant risk. In corporate environments, for example, employees may be compelled to participate in unethical or illegal practices under the pretext of “following orders” or “adhering to company culture.” The pressure to maintain employment or obtain a promotion can suppress critical thinking and moral responsibility, resulting in actions that harm others and violate the individual’s own values.

The diffusion of power in contemporary society creates an environment conducive to the erosion of individual responsibility. In an interconnected and technologically advanced world, it becomes increasingly difficult for the average citizen to understand how decisions are made and who is truly responsible for them.

References

- Stanley Milgram. Obedience to Authority: An Experimental View (1974).